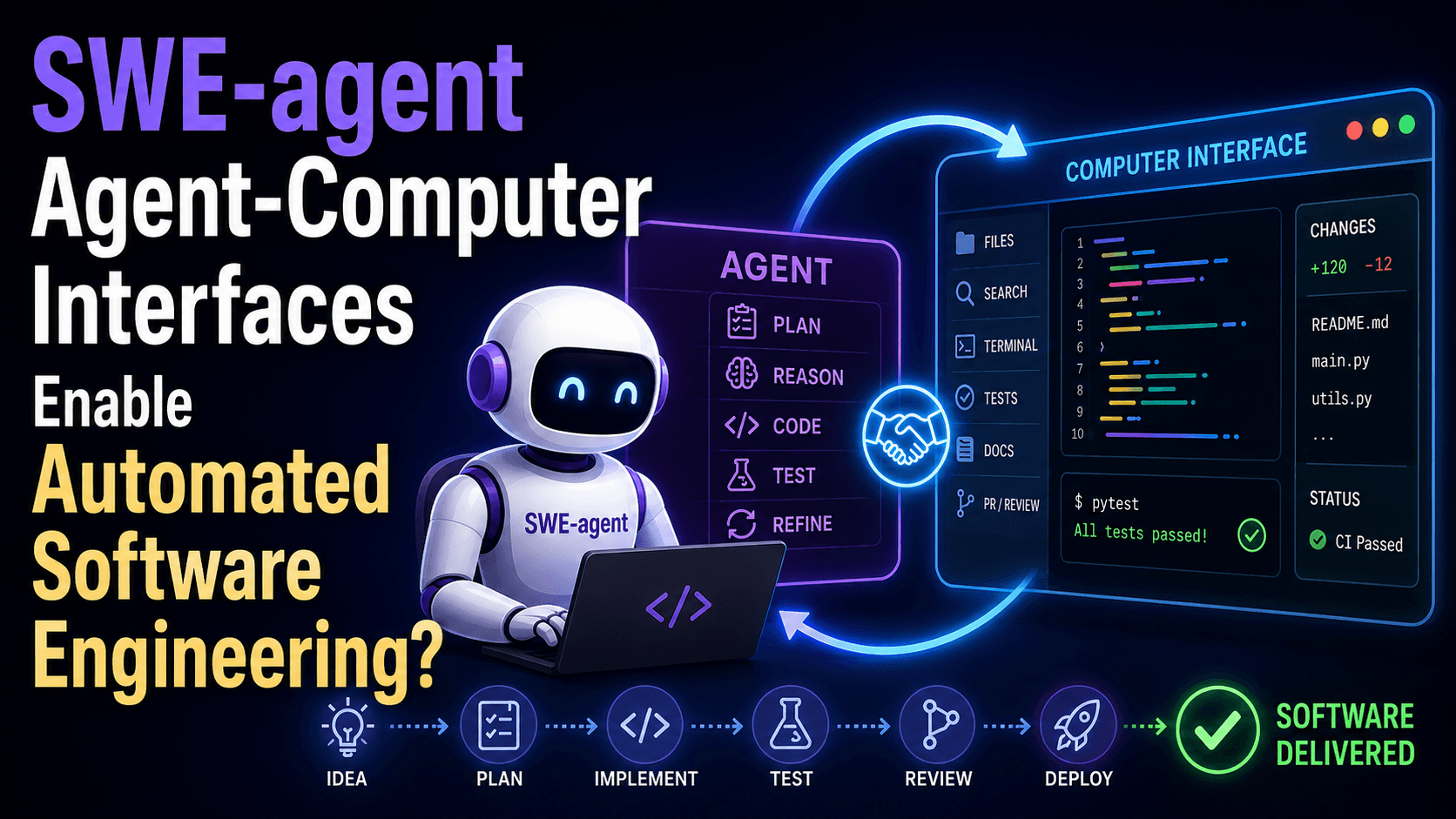

Paper Summary: SWE-agent: Agent-Computer Interfaces Enable Automated Software Engineering

This is a Claude-generated summary!

PAPER X-RAY

Title: SWE-agent: Agent-Computer Interfaces Enable Automated Software Engineering

Institution: Princeton University

Year: 2024 (NeurIPS 2024)

TL;DR: LM agents perform much better at fixing real GitHub issues when given a custom interface (ACI) instead of a raw shell.

Core Claim: A carefully designed agent-computer interface (ACI) boosts the resolve rate by 64% (relative) over the shell-only baseline on SWE-bench Lite, without changing the underlying model at all.

Beats SOTA: Yes (12.47% vs 3.8% on the SWE-bench full test set)

Code Open?: Yes (swe-agent.com)

Should you care? If you're building coding agents or any agent that needs to interact with a filesystem or codebase, this paper directly tells you how to design your tool interface — and shows that doing so matters more than many assume. The ACI framing is a clean abstraction worth stealing immediately.

Phase 2 — Section-by-Section Breakdown

Section 1 — Introduction

What They're Saying

The core setup: LM agents are increasingly being used for complex software engineering tasks. But so far, they've been given raw Linux shell access — and they struggle with it. The paper's thesis is that LMs are a new kind of "user" and deserve a purpose-built interface, just like humans got IDEs instead of raw terminal commands.

Think of it like this: you wouldn't hand a new hire a terminal and say "good luck fixing that bug in a 50k-line repo." You'd give them VS Code, search tools, syntax highlighting, and git blame. SWE-agent is the LM equivalent of that.

Why It Matters

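The previous best non-interactive approach (RAG + direct patch generation, with Claude 3 Opus) solved 3.8% of SWE-bench. SWE-agent with GPT-4 Turbo solves 12.47%, roughly 3.3× as many. Holding the model fixed isolates the interface effect: GPT-4 Turbo goes from 1.31% with RAG to 12.47% with SWE-agent, and from 11.0% with a raw shell to 18.0% with the ACI on SWE-bench Lite, all with zero model fine-tuning.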

Section 2 — The Agent-Computer Interface (ACI) Concept

What They're Saying

The ACI is an abstraction layer between the LM and the computer. The analogy they draw:

Human → UI (VSCode, PyCharm) → Computer

LM → ACI → Computer

Humans benefit from interfaces tailored to human cognition. LMs have different constraints: they can't see GUIs, every token in context costs compute, and distracting context actually hurts their performance.

Four ACI Design Principles (distilled from trial and error):

| Principle | What It Means | Bad Example |
|---|---|---|
| Simple, easy actions | Few options per command, clear docs | grep with 30 flags |
| Compact & efficient | One action = meaningful progress | Need 5 shell ops to do one edit |
| Informative but concise feedback | Show what changed, not everything | cat dumping 1,000 lines |
| Guardrails | Block/warn on mistakes automatically | Silent bad edits with sed |

Why It Matters

These four principles are the real intellectual contribution. They aren't obvious from just "use a shell" — they came from manually watching agents fail in specific ways.
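To make the third principle concrete, here is a minimal sketch of a feedback formatter. It assumes raw command output arrives as a string. The function name `format_feedback`, the 100-line limit, and the message wording are illustrative assumptions, not SWE-agent's actual code.

```python
def format_feedback(output: str, max_lines: int = 100) -> str:
    """Turn raw command output into concise feedback for the LM (hypothetical helper)."""
    if not output.strip():
        # Say explicitly that the command ran; empty output is ambiguous to an LM.
        return "Your command ran successfully and did not produce any output."
    lines = output.splitlines()
    if len(lines) > max_lines:
        # Truncate, and tell the agent how to get a smaller result.
        omitted = len(lines) - max_lines
        kept = "\n".join(lines[:max_lines])
        return f"{kept}\n[{omitted} more lines omitted; narrow the command to see them]"
    return output


long_output = "\n".join(f"line {i}" for i in range(150))
print(format_feedback(long_output).splitlines()[-1])
# → [50 more lines omitted; narrow the command to see them]
```

Both branches apply the same idea. The agent should never have to guess what happened, and it should never be handed more text than it can use.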

Section 3 — SWE-agent: The Actual System

Architecture Breakdown

ARCHITECTURE

Issue Description → LM Agent (GPT-4 Turbo / Claude 3 Opus) generates Thought + Command → ACI Layer → Linux shell execution → Feedback (concise, structured) returned to the agent → repeat until 'submit' → final patch applied to repo

ACI Layer components:

Search/Navigation: find_file, search_file, search_dir (≤50 results)

File Viewer: open, scroll_up / scroll_down, goto <line>, 100-line window

File Editor: edit <start>:<end> <text> → linter check → auto-update view

Context Manager: collapse old observations, keep last 5 full observations, error dedup

Novel components (not in standard shell agents):

The edit <start>:<end> command: multi-line in-place edit with one call

Linter-gated editing (bad edits are rejected before applying)

Observation collapsing (only last 5 observations shown in full)

Search result capping at 50 (agents told to refine if exceeded)
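Here is a rough sketch of the capped-search idea, assuming a plain substring match over every readable file under a directory. The name `search_dir` mirrors the command listed in the architecture. The implementation is an assumption, as are the choice to cap on matching files rather than individual matches and the exact output wording.

```python
from pathlib import Path


def search_dir(term: str, root: str = ".", max_results: int = 50) -> str:
    """Hypothetical capped directory search: count matches of `term` per file."""
    counts: dict[str, int] = {}
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file():
            continue
        try:
            n = path.read_text(encoding="utf-8").count(term)
        except (UnicodeDecodeError, OSError):
            continue  # skip binary or unreadable files
        if n:
            counts[str(path)] = n
    if not counts:
        return f'No matches found for "{term}" in {root}'
    if len(counts) > max_results:
        # Guardrail: refuse to flood the context; ask for a narrower query instead.
        return (f'More than {max_results} files matched "{term}" in {root}. '
                "Please narrow your search.")
    header = f'Found {sum(counts.values())} matches for "{term}" in {root}:'
    return "\n".join([header] + [f"{p} ({n} matches)" for p, n in counts.items()])
```

The refinement message is the key design choice. Instead of returning a truncated list that looks complete, the tool tells the agent the query was too broad.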

Standard components: built on a Linux shell, with a ReAct-style Thought → Action → Observation loop.
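The ReAct-style loop can be sketched as follows, assuming the model and the ACI are passed in as plain callables. `run_agent`, `Step`, `Trajectory`, and the `max_steps` cap are hypothetical. Per the experimental setup, the real system ends runs on a dollar budget rather than a step count.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Step:
    thought: str
    action: str
    observation: str


@dataclass
class Trajectory:
    steps: List[Step] = field(default_factory=list)
    submitted: bool = False


def run_agent(
    issue: str,
    model: Callable[[str, List[Step]], Tuple[str, str]],
    execute: Callable[[str], str],
    max_steps: int = 30,
) -> Trajectory:
    """Thought -> Action -> Observation loop (hypothetical sketch).

    `model` maps (issue, history) to a (thought, action) pair; `execute`
    runs the action through the ACI and returns its feedback.
    """
    traj = Trajectory()
    for _ in range(max_steps):
        thought, action = model(issue, traj.steps)
        if action.strip() == "submit":
            traj.submitted = True  # the agent decided its patch is ready
            break
        observation = execute(action)
        traj.steps.append(Step(thought, action, observation))
    return traj


# Usage with a scripted stand-in for the LM:
script = iter([("Find the file first.", "find_file utils.py"), ("Looks fixed.", "submit")])
traj = run_agent("example issue", lambda issue, history: next(script), lambda a: f"ran: {a}")
print(traj.submitted, len(traj.steps))  # → True 1
```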

The edit Command in Detail

edit <start_line>:<end_line>

<replacement text>

end_of_edit

What this does:

Replaces lines start_line through end_line in the currently open file

Runs a Python linter on the result

If linting fails: rejects the edit, shows the agent the error + what the edit would have looked like, asks it to try again

If linting passes: applies the edit, auto-shows the updated file view

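A simplified Python equivalent follows. The `show_file_window` helper is a hypothetical stand-in for SWE-agent's file viewer.

```python
import os
import py_compile
import tempfile


def show_file_window(filepath, start_line, window=100):
    """Hypothetical helper: return `window` numbered lines starting at start_line."""
    with open(filepath) as f:
        lines = f.readlines()
    first = max(start_line - 1, 0)
    return "".join(f"{first + i + 1}:{line}" for i, line in enumerate(lines[first:first + window]))


def edit_file(filepath, start_line, end_line, new_text):
    """Replace lines start_line..end_line (1-indexed, inclusive) only if the result compiles."""
    with open(filepath) as f:
        lines = f.readlines()
    new_lines = lines[:start_line - 1] + [new_text + "\n"] + lines[end_line:]

    # Lint check: write the candidate file to a temp path and try to compile it.
    with tempfile.NamedTemporaryFile(mode="w", suffix=".py", delete=False) as tmp:
        tmp.writelines(new_lines)
        tmp_path = tmp.name
    try:
        py_compile.compile(tmp_path, doraise=True)
    except py_compile.PyCompileError as e:
        return f"Syntax error: {e}\nEdit NOT applied."
    finally:
        os.unlink(tmp_path)  # clean up exactly once, on success or failure

    with open(filepath, "w") as f:
        f.writelines(new_lines)
    return show_file_window(filepath, start_line)  # return the updated view
```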

Gotcha: The linter only catches major syntax errors (E999 level). Logic bugs get through. The agent still needs to run tests to catch semantic errors.

Observation Collapsing

Old observations (everything before the last 5) are collapsed to a single-line summary. This prevents the LM's context window from filling up with stale file contents. Think of it as a sliding context window over the agent's action history.
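Here is a minimal sketch of observation collapsing, assuming the history is stored as (action, observation) pairs. The `keep_last=5` default follows the description above. The `Turn` type, the placeholder text `Old output omitted (N lines)`, and the choice to keep every action line visible are assumptions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Turn:
    action: str
    observation: str


def collapse_history(history: List[Turn], keep_last: int = 5) -> List[str]:
    """Render history for the prompt, keeping only the last `keep_last` observations in full."""
    cutoff = len(history) - keep_last  # turns with index < cutoff get collapsed
    rendered: List[str] = []
    for i, turn in enumerate(history):
        rendered.append(f"> {turn.action}")  # actions always stay visible
        if i < cutoff:
            n_lines = len(turn.observation.splitlines())
            rendered.append(f"Old output omitted ({n_lines} lines)")
        else:
            rendered.append(turn.observation)
    return rendered
```

Keeping the actions while dropping their outputs lets the agent remember what it already tried without re-reading stale file contents.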

Section 4 — Experimental Setup

Datasets:

SWE-bench full (2,294 tasks from 12 real Python repos, GitHub issues → patches)

SWE-bench Lite (300 canonical, self-contained bug fix instances — used for ablations)

HumanEvalFix (short-form code debugging, 3 languages)

Models: GPT-4 Turbo (128k context) and Claude 3 Opus (200k context). Smaller models (Llama 3, DeepSeek Coder) were tried but context window or capability limitations made them non-viable.

Baselines:

RAG: BM25 retrieval + direct patch generation (no interaction)

Shell-only: LM interacting with raw Linux shell (no ACI)

Metric: % Resolved (pass@1) — all unit tests pass after patch. Also tracks $ Avg. Cost per resolved instance.

Budget: $4/instance hard cap. If hit, current edits are submitted as-is.
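Here is a sketch of the budget rule, assuming each agent step reports its API cost in dollars. The function name `run_with_budget` and its return values are hypothetical. Only the $4 cap and the submit-when-exhausted behavior come from the setup above.

```python
from typing import Iterable, Tuple


def run_with_budget(step_costs: Iterable[float], budget: float = 4.0) -> Tuple[str, float, int]:
    """Hypothetical cost guard: stop the episode once cumulative API cost reaches `budget`.

    Each element of `step_costs` is the dollar cost of one agent step.
    Returns (outcome, dollars spent, steps taken).
    """
    spent = 0.0
    steps = 0
    for cost in step_costs:
        spent += cost
        steps += 1
        if spent >= budget:
            # Budget exhausted: end the run and submit whatever edits exist so far.
            return "auto_submitted", spent, steps
    # The steps ran out first, e.g. because the agent issued 'submit' on its own.
    return "agent_finished", spent, steps
```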

Phase 4 — Results

Main Results

| Model | SWE-bench Full | Avg. Cost | SWE-bench Lite | Avg. Cost |
|---|---|---|---|---|
| RAG w/ GPT-4 Turbo | 1.31% | $0.13 | 2.67% | $0.13 |
| RAG w/ Claude 3 Opus | 3.79% | $0.25 | 4.33% | $0.25 |
| Shell-only (GPT-4 Turbo) | n/a | n/a | 11.00% | $1.46 |
| SWE-agent (GPT-4 Turbo) | 12.47% | $1.59 | 18.00% | $1.67 |
| SWE-agent (Claude 3 Opus) | 10.46% | $2.59 | 13.00% | $2.18 |
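The headline ratios can be recomputed directly from the table. The 3.3× figure compares SWE-agent with GPT-4 Turbo against RAG with Claude 3 Opus. Only the same-model comparisons isolate the interface effect. The variable names are illustrative.

```python
def relative_gain(new: float, old: float) -> float:
    """Relative improvement of `new` over `old` as a fraction (0.64 means +64%)."""
    return new / old - 1


# % Resolved values copied from the main results table.
rag_gpt4_full = 1.31         # RAG, GPT-4 Turbo, full set
rag_opus_full = 3.79         # RAG, Claude 3 Opus, full set (best RAG baseline)
swe_agent_gpt4_full = 12.47  # SWE-agent, GPT-4 Turbo, full set
shell_gpt4_lite = 11.00      # shell-only, GPT-4 Turbo, Lite
swe_agent_gpt4_lite = 18.00  # SWE-agent, GPT-4 Turbo, Lite

print(f"{swe_agent_gpt4_full / rag_opus_full:.1f}x vs best RAG (different model)")  # → 3.3x vs best RAG (different model)
print(f"{swe_agent_gpt4_full / rag_gpt4_full:.1f}x vs RAG (same model)")  # → 9.5x vs RAG (same model)
print(f"+{relative_gain(swe_agent_gpt4_lite, shell_gpt4_lite):.0%} vs shell-only (same model)")  # → +64% vs shell-only (same model)
```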

HumanEvalFix:

| Model | Python | JS | Java |
|---|---|---|---|
| SWE-agent w/ GPT-4 Turbo | 87.7% | 89.7% | 87.9% |
| GPT-4 (non-interactive) | 47.0% | 48.2% | 50.0% |
| WaveCoder-DS 6.7B | 57.9% | 52.4% | 57.3% |

The HumanEvalFix gap is massive — interactive editing with feedback nearly doubles pass rates over direct generation.

Ablation Study (SWE-bench Lite)

| Component Ablated | % Resolved | Drop (pts) |
|---|---|---|
| Full SWE-agent | 18.0% | n/a |
| edit w/o linting | 15.0% | ↓3.0 |
| No edit (bash only) | 10.3% | ↓7.7 |
| Summarized search (ours) | 18.0% | n/a |
| Iterative search | 12.0% | ↓6.0 |
| No search | 15.7% | ↓2.3 |
| 100-line window (ours) | 18.0% | n/a |
| 30-line window | 14.3% | ↓3.7 |
| Full file view | 12.7% | ↓5.3 |
| Last 5 obs (ours) | 18.0% | n/a |
| Full history | 15.0% | ↓3.0 |
| w/o demo | 16.3% | ↓1.7 |

Standout ablation findings:

Iterative search is worse than having no dedicated search tools at all (12.0% vs 15.7%). Agents exhaustively call next until they've seen every match, burning context and budget. The lesson: LM-friendly ≠ human-friendly. Iterative search works well for humans because we stop once we've found what we need; LMs tend to be compulsive explorers.

Full file view hurts more than a 30-line window (12.7% vs 14.3%). Too much context degrades performance: LMs get lost in irrelevant code.

Linting is worth 3 percentage points (18.0% vs 15.0%). Without it, cascading bad edits are common.

⚠️ Red Flags / Limitations

12.47% on SWE-bench full is impressive vs prior SOTA, but still leaves ~87.5% unsolved. This is hard territory.

Ablations are only on GPT-4 Turbo. It's not confirmed the same ACI design choices are optimal for Claude or open-source models.

The $4/instance budget cap means some failures are budget-limited rather than capability-limited. The paper acknowledges this, but the sensitivity analysis suggests raising the budget wouldn't help much.

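Repository-level evaluation is Python-only (SWE-bench contains only Python repos); the JS and Java numbers come only from the short-form HumanEvalFix benchmark.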

✅ Green Flags

Ablations are thorough and logically structured — each component tested independently.

Multiple seeds for variance analysis (pass@k over 6 runs shown in Figure 4).

Failure mode analysis via automated LM labeling with 87% human-agreement validation.

Code open-sourced.

Phase 3 — Agent Behavior Analysis

How Agents Actually Solve Problems

From trajectory analysis of the 286 resolved instances on SWE-bench full:

Phase 1 — Localization (Turns 1–5):

Agents start with find_file / search_dir (broad), then zoom in with search_file / goto (specific)

Or: write a reproduction script (create → edit → python) to surface the bug

Phase 2 — Edit-Execute Loop (Turns 5+):

Alternating edit and python dominates the middle and late turns

Agents interleave search_file / scroll for additional context as needed

Success vs Failure patterns:

Resolved instances: median 12 steps, $1.21 cost

Unresolved instances: mean 21 steps, $2.52 cost

"Agents succeed quickly and fail slowly" — if it's not solved by turn ~15, it probably won't be

Failure distribution (n=248 unresolved on Lite):

Incorrect Implementation ~40%

Overly Specific Implementation ~12%

Cascading Failed Edits ~23%

Failed to Find Relevant File ~2.4%

Failed to Recover from Edit ~4.8%

Gave Up Prematurely ~2.4%

Can't Reproduce ~12.9%

Ran Out of Time ~2.0%

The biggest failure class is simply a wrong fix: the agent finds the right file and makes an edit, but the logic is incorrect. This is fundamentally a reasoning problem, not an interface problem, suggesting ACI improvements have a ceiling.

Phase 5 — Practical Takeaways

1. Can I use this today? Yes. The code is open-source at swe-agent.com. You can run it against your own GitHub issues. The system uses the Anthropic/OpenAI API.

2. What's worth stealing? Three things, ranked by ROI:

The edit <start>:<end> + linter pattern: Replace file-level writes with line-range edits that auto-validate. Implement this if you're building any file-editing agent.

Observation collapsing: Keep only the last N full observations; collapse older ones to 1-line summaries. Prevents context flood.

Capped search results with refinement guidance: Don't dump 200 grep results. Cap at 50, and tell the agent to narrow the query if exceeded.

3. What does it replace? In a coding agent stack: raw shell tool calls for editing and search. Specifically replaces sed, grep piped directly to the LM, and unstructured cat.

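4. Compute cost: ~$1.59 average cost per resolved instance with GPT-4 Turbo on SWE-bench full ($1.67 on Lite). That average covers only successful runs. Failed runs also cost money and tend to run longer (mean $2.52 for unresolved instances), so the total spend per fixed issue is several times higher. For production use on real codebases, costs stack up fast.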

5. Limitations they glossed over:

Python-only (SWE-bench is Python). How well does this ACI transfer to TypeScript, Go, or polyglot repos? Not tested.

The linter is Python-specific (the Python equivalent above uses py_compile). You'd need language-aware linting for other stacks.

The 12.47% ceiling suggests the remaining failures are mostly reasoning failures, not interface failures; ACI alone won't get you to 50%.

No multi-file edit support in a single command — complex refactors still require many sequential edits.
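The compute-cost point in item 4 can be made explicit with a back-of-envelope sketch built on three assumptions:

* Resolved runs cost the table's $1.59 average.
* Failed runs cost the $2.52 mean reported for unresolved instances in the behavior analysis.
* The resolve rate is the full-set 12.47%.

These statistics come from different analyses, so the output is an order-of-magnitude estimate, not a number the paper reports. The function name is hypothetical.

```python
def expected_cost_per_fix(p_resolve: float, cost_resolved: float, cost_failed: float) -> float:
    """Expected API spend per successfully fixed issue.

    Spend per attempt is p*cost_resolved + (1 - p)*cost_failed; dividing by p
    spreads the cost of the failed attempts over the successful ones.
    """
    if not 0 < p_resolve <= 1:
        raise ValueError("p_resolve must be in (0, 1]")
    per_attempt = p_resolve * cost_resolved + (1 - p_resolve) * cost_failed
    return per_attempt / p_resolve


# Assumed inputs (mixed statistics, see above): full-set resolve rate,
# table average for resolved runs, behavior-analysis mean for unresolved runs.
estimate = expected_cost_per_fix(p_resolve=0.1247, cost_resolved=1.59, cost_failed=2.52)
print(f"~${estimate:.2f} per successful fix")  # → ~$19.28 per successful fix
```

Under these assumptions, each successful fix costs roughly $19, about 12× the headline per-resolved-instance figure.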

Phase 6 — The "So What" Summary

🔑 Core innovation: The ACI abstraction — treat LM agents as a distinct category of computer user, and design interfaces specifically for their strengths/weaknesses (no GUI, token budget constraints, tendency to over-explore)

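⚡ Performance gain: 3.3× over the best non-interactive RAG baseline on SWE-bench full (12.47% vs 3.79%, though that baseline used Claude 3 Opus); 64% relative gain over the shell-only agent on SWE-bench Lite (18.0% vs 11.0%) with the same GPT-4 Turbo model, from interface design alone with zero model changes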

🛠️ How to use it: Clone swe-agent, point it at a GitHub issue + repo, run with GPT-4 or Claude. Or steal the edit <start>:<end> + linter + observation-collapsing patterns into your own agent framework.

⚠️ Watch out for: ~87% of SWE-bench issues still unsolved; the design was optimized for GPT-4 Turbo and may not transfer cleanly to other models; Python-only validation; the remaining failure modes are logic/reasoning problems that better ACI won't fix

📚 What to read next:

SWE-bench (Jimenez et al. 2023) — the benchmark this builds on

InterCode (Yang et al. 2023) — the shell-only agent baseline SWE-agent improves on

ReAct (Yao et al. 2022) — the Thought+Action loop SWE-agent uses at inference time