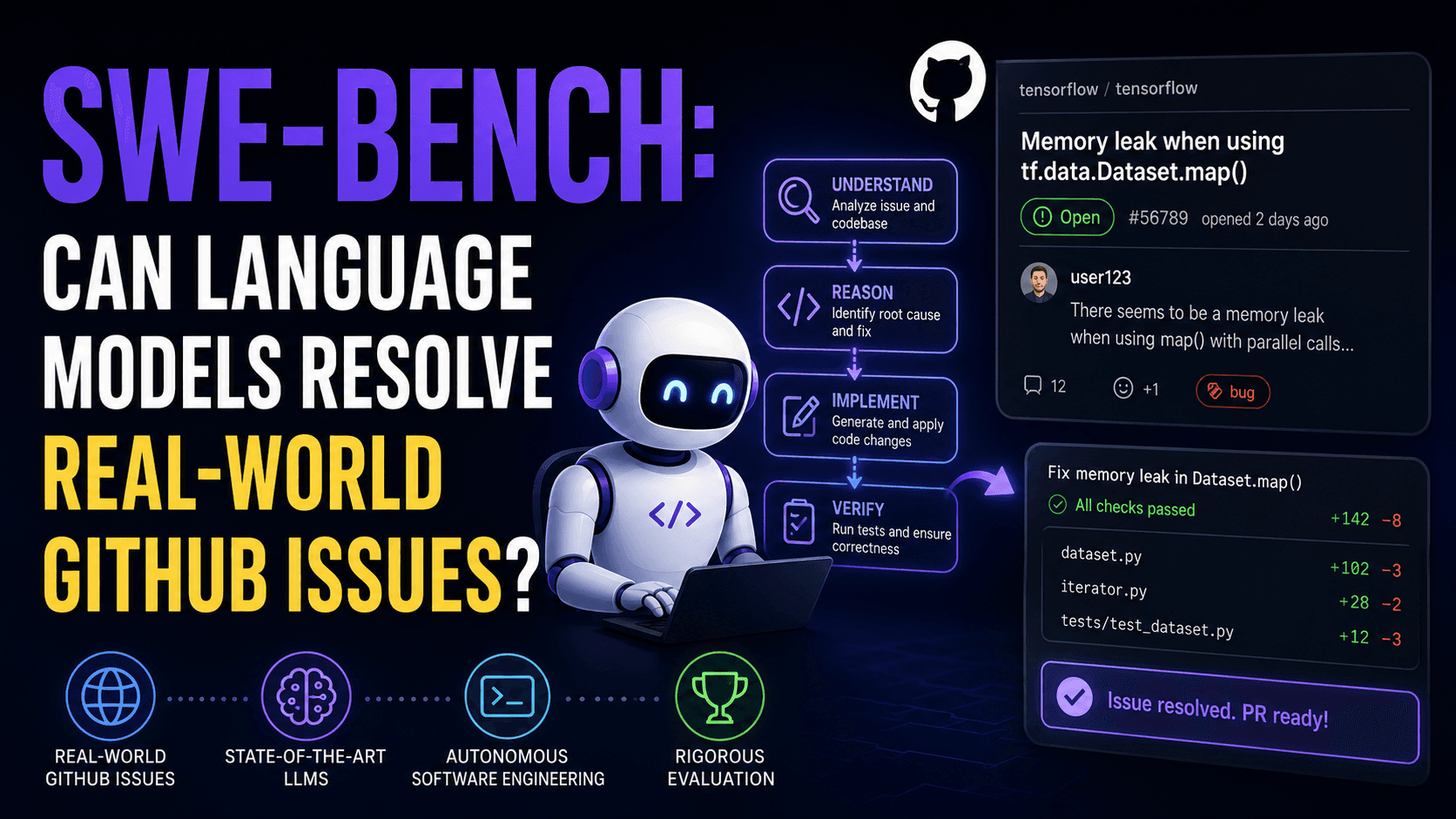

Paper Summary: SWE-BENCH: CAN LANGUAGE MODELS RESOLVE REAL-WORLD GITHUB ISSUES?

Here's a full deep-dive on the SWE-bench paper:

PAPER X-RAY

Title: SWE-bench: Can Language Models Resolve Real-World GitHub Issues?

Institution: Princeton University / University of Chicago

Year: 2024 (ICLR 2024)

TL;DR: A benchmark of 2,294 real GitHub issues requiring LMs to edit Python codebases. SOTA models barely solve ~2–4% of them.

Core Claim: Existing LMs fail at real-world software engineering, and this benchmark can track progress toward more capable coding AIs.

Beats SOTA: N/A. This is a benchmark paper, not a model paper.

Code Open?: Yes, at swebench.com

Should you care? If you're building coding agents or evaluating LLMs on code tasks, SWE-bench is now the standard hard eval. HumanEval is saturated; this is what actually measures real software reasoning capability.

Phase 2 — Section-by-Section Breakdown

Section 1 — Introduction

What They're Saying

HumanEval and similar benchmarks ask models to write small self-contained functions from scratch. Real software engineering is nothing like that. Real bugs involve:

Navigating a large repo with thousands of files

Understanding how components interact across files

Making small, targeted edits rather than generating code from scratch

Verifying fixes via test suites

SWE-bench operationalizes this: given a real GitHub issue + a full repo snapshot, generate a patch that fixes it. Evaluation is execution-based — the patch must pass the original tests.

Why It Matters

Saturated benchmarks give false confidence. A model scoring 90% on HumanEval might be essentially useless in a real IDE. SWE-bench provides a signal that actually correlates with practical utility.

Section 2 — Benchmark Construction

What They're Saying

The pipeline has 3 stages to turn GitHub PRs into clean task instances:

Stage 1: Scrape PRs

- 12 popular Python repos on GitHub

- ~90,000 raw PRs collected

Stage 2: Attribute Filter

- Must be: Merged PR

- Must: resolve a GitHub issue

- Must: modify test files (proves a test was added)

→ Keeps "real" fixes with verifiable intent

Stage 3: Execution Filter

- Install the repo at the PR's base commit

- Apply test patch → run tests → log pre-results

- Apply solution patch → run tests → log post-results

- Keep only instances with ≥1 "fail-to-pass" test

→ Removes trivial/degenerate cases

After this pipeline: 90,000 PRs → 11,407 after attribute filter → 2,294 final instances.

Why It Matters

This is a careful construction. The "fail-to-pass" requirement is the key: it guarantees the task isn't already solved and that there's a concrete test that verifies the fix. The execution-based filter also removes issues with broken installs, noise, etc.
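The execution filter in Stage 3 can be sketched in a few lines. This is a minimal illustration, not the authors' harness: the function names, the `"pass"`/`"fail"` status strings, and the choice to treat tests missing from the pre-run as failing are all assumptions made for the example.

```python
from typing import Dict, List, Tuple

PASS, FAIL = "pass", "fail"  # hypothetical status labels


def split_tests(pre: Dict[str, str], post: Dict[str, str]) -> Tuple[List[str], List[str]]:
    """Return (fail_to_pass, pass_to_pass) test names.

    pre:  test status after applying only the test patch
    post: test status after also applying the solution patch
    Assumption: a test absent from `pre` counts as failing before the fix.
    """
    fail_to_pass, pass_to_pass = [], []
    for name, after in post.items():
        if after != PASS:
            continue  # tests that still fail tell us nothing about the fix
        before = pre.get(name, FAIL)
        (pass_to_pass if before == PASS else fail_to_pass).append(name)
    return sorted(fail_to_pass), sorted(pass_to_pass)


def keep_instance(pre: Dict[str, str], post: Dict[str, str]) -> bool:
    """Keep a candidate only if at least one test flips from fail to pass."""
    fail_to_pass, _ = split_tests(pre, post)
    return len(fail_to_pass) >= 1


pre_run = {"test_parse": FAIL, "test_format": PASS}
post_run = {"test_parse": PASS, "test_format": PASS, "test_new": PASS}
f2p, p2p = split_tests(pre_run, post_run)
print(f2p, p2p, keep_instance(pre_run, post_run))
```

A PR whose tests all passed before the solution patch yields an empty fail-to-pass list and is dropped, which is how the filter removes degenerate cases.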

Task Formulation

Input to model: Issue text description + full codebase (as files)

Output expected: A .patch file specifying line-level diffs

Evaluation: Apply the patch → run all associated tests → resolved if all FAIL_TO_PASS and PASS_TO_PASS tests pass

This is notable: the model must output a structured, machine-parseable diff format, not just free-form code.
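To make "machine-parseable diff" concrete, here is a small sketch that builds a unified diff with Python's standard `difflib`. The file name `calc.py` and its contents are made up for illustration; the benchmark's prompts and patches are far larger.

```python
import difflib


def make_patch(path: str, old: str, new: str) -> str:
    """Build a unified diff for one file, using git-style a/ and b/ prefixes."""
    diff = difflib.unified_diff(
        old.splitlines(keepends=True),
        new.splitlines(keepends=True),
        fromfile=f"a/{path}",
        tofile=f"b/{path}",
    )
    return "".join(diff)


# Hypothetical one-line bug fix.
old_src = "def add(a, b):\n    return a - b\n"
new_src = "def add(a, b):\n    return a + b\n"
patch = make_patch("calc.py", old_src, new_src)
print(patch)
```

The format is unforgiving: the hunk header and the context lines must match the target file, so a model that reproduces the surrounding code slightly wrong produces a patch that fails to apply at all.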

Dataset Statistics (Key Numbers)

| Attribute | Mean | Max |
|---|---|---|
| Issue text (words) | 195 | 4,477 |
| Codebase files | 3,010 | 5,890 |
| Codebase lines | 438K | 886K |
| Lines edited in gold patch | 32.8 | 5,888 |
| Files edited | 1.7 | 31 |
| Fail-to-pass tests | 9.1 | 1,633 |

Median task: ~140-word issue, ~1,900 files, ~1 function edited in ~15 lines, ~1 fail-to-pass test.

SWE-bench Lite

A 300-instance subset sampled for more self-contained, functional bug fixes. Good for faster iteration. Covers 11/12 repos. Use this to prototype quickly before running the full eval.

Section 3 — SWE-Llama

What They're Saying

Off-the-shelf CodeLlama can't follow the patch-generation format at all — it outputs placeholders or random code. So they fine-tuned CodeLlama-Python 7B and 13B using LoRA on 19,000 issue-PR pairs from 37 repos (disjoint from eval repos to prevent contamination).

Training Details

Base model: CodeLlama-Python 7B / 13B

Adapter: LoRA (r=16, α=16, dropout=0.05), applied to Q, K, V, O projection matrices

LR: 6e-4

Batch size: 32

Max epochs: 4

Max tokens: 30,000 (cuts corpus to ~10k effective instances)

Hardware: 4× A100 (7B, 20 hrs) | 8× A100 (13B, 47 hrs)

Tricks: DeepSpeed Ulysses + FlashAttention for long context
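For a sense of how small these adapters are, the sketch below counts the trainable LoRA weights implied by the settings above. The hidden sizes and layer counts (4096/32 for 7B, 5120/40 for 13B) are not stated in this summary; they are assumed from the standard Llama-2-style configurations, and the count assumes all four projections are square hidden-by-hidden matrices.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class LoraSetup:
    rank: int = 16
    alpha: int = 16
    dropout: float = 0.05
    targets: Tuple[str, ...] = ("q", "k", "v", "o")

    @property
    def scaling(self) -> float:
        # LoRA scales the low-rank update by alpha / rank.
        return self.alpha / self.rank


def lora_trainable_params(hidden_size: int, num_layers: int, cfg: LoraSetup) -> int:
    """Each adapted matrix adds A (rank x d_in) and B (d_out x rank)."""
    per_matrix = cfg.rank * (hidden_size + hidden_size)
    return per_matrix * len(cfg.targets) * num_layers


cfg = LoraSetup()
params_7b = lora_trainable_params(hidden_size=4096, num_layers=32, cfg=cfg)   # assumed dims
params_13b = lora_trainable_params(hidden_size=5120, num_layers=40, cfg=cfg)  # assumed dims
print(f"7B adapter:  {params_7b:,} trainable weights")
print(f"13B adapter: {params_13b:,} trainable weights")
```

Under those assumptions the adapters come to roughly 17M and 26M weights, a fraction of a percent of the base models.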

Training Data Difference

The training data doesn't require test changes in the PR — broader collection. This gives 19K pairs vs. the 2,294 strict eval instances.

Why It Matters

SWE-Llama shows you can teach a smaller open model to at least generate well-formatted patches. It's competitive with Claude 2 in some settings and runs on consumer hardware.

Section 4 — Experimental Setup

Retrieval Problem

The model can't fit 438K lines of code into any context window. So they need to select which files to include. Two strategies:

1. Sparse Retrieval (BM25)

Use BM25 to retrieve files most relevant to the issue text

Files are prepended with their path (to help filename-based retrieval)

Test 3 context limits: 13k, 27k, 50k tokens

Counterintuitively: shorter context windows perform better (models struggle with long irrelevant context)

2. Oracle Retrieval

Cheat: give the model exactly the files the gold patch edits

Unrealistic in practice, but useful to measure an upper bound

Even with perfect file selection, models still mostly fail
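The BM25 strategy above can be sketched in pure Python: score each file (with its path prepended) against the issue text, then add files in rank order until the token budget is spent. This is an illustrative reimplementation, not the paper's retriever; the regex tokenizer, the `k1`/`b` defaults, the token counting, and the toy repo are all assumptions.

```python
import math
import re
from collections import Counter
from typing import Dict, List


def tokenize(text: str) -> List[str]:
    # Crude stand-in for a real tokenizer (assumption).
    return re.findall(r"[a-z0-9_]+", text.lower())


def bm25_rank(query: str, docs: Dict[str, str], k1: float = 1.5, b: float = 0.75) -> List[str]:
    """Rank file paths by Okapi BM25 score against the query."""
    # Prepend the path so filename matches contribute to the score.
    tokens = {path: tokenize(path + "\n" + body) for path, body in docs.items()}
    n_docs = len(tokens)
    avg_len = sum(len(t) for t in tokens.values()) / n_docs
    doc_freq: Counter = Counter()
    for toks in tokens.values():
        doc_freq.update(set(toks))

    query_terms = set(tokenize(query))
    scores = {}
    for path, toks in tokens.items():
        tf = Counter(toks)
        score = 0.0
        for term in query_terms:
            if tf[term] == 0:
                continue
            idf = math.log((n_docs - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5) + 1.0)
            norm = tf[term] + k1 * (1 - b + b * len(toks) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores[path] = score
    return sorted(docs, key=lambda p: scores[p], reverse=True)


def pack_context(ranked: List[str], docs: Dict[str, str], budget_tokens: int) -> List[str]:
    """Take files in rank order until the next one would exceed the budget."""
    chosen, used = [], 0
    for path in ranked:
        cost = len(tokenize(path + "\n" + docs[path]))
        if used + cost > budget_tokens:
            break
        chosen.append(path)
        used += cost
    return chosen


# Hypothetical three-file repo and issue text.
repo = {
    "utils/dates.py": "def parse_date(s):\n    # handle timezone offsets\n    return s\n",
    "utils/strings.py": "def slugify(s):\n    return s.lower()\n",
    "README.md": "A small utility library.\n",
}
issue = "parse_date crashes on timezone offsets"
ranked = bm25_rank(issue, repo)
context_files = pack_context(ranked, repo, budget_tokens=12)
print(ranked[0], context_files)
```

Because packing is purely rank-ordered, one large irrelevant file ranked early can crowd out the file that actually needs editing, which is one way a bigger budget ends up adding noise rather than signal.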

BM25 Recall vs. Oracle Files

| Context | Avg Recall | All Oracle | Any Oracle |
|---|---|---|---|
| 13k | 29.6% | 26.1% | 34.8% |
| 27k | 44.4% | 39.8% | 51.3% |
| 50k | 51.1% | 45.9% | 58.4% |

At 27k tokens, BM25 misses the right files entirely in ~50% of cases. This is a major bottleneck.
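The three columns in that table are easy to confuse, so here is one plausible way to compute them, assuming "Avg Recall" is the mean per-instance fraction of oracle files retrieved, "All" is the share of instances where every oracle file was retrieved, and "Any" is the share where at least one was. The function name and the toy data are hypothetical.

```python
from typing import Dict, List, Set


def retrieval_metrics(retrieved: List[Set[str]], oracle: List[Set[str]]) -> Dict[str, float]:
    """Compare retrieved file sets with oracle (gold-patch) file sets per instance."""
    recalls, all_hits, any_hits = [], 0, 0
    for got, gold in zip(retrieved, oracle):
        hits = len(got & gold)
        recalls.append(hits / len(gold))
        all_hits += hits == len(gold)
        any_hits += hits > 0
    n = len(recalls)
    return {
        "avg_recall": sum(recalls) / n,
        "all_oracle": all_hits / n,
        "any_oracle": any_hits / n,
    }


# Four made-up instances.
retrieved = [{"a.py", "b.py"}, {"c.py"}, {"x.py"}, {"d.py", "e.py"}]
oracle = [{"a.py"}, {"c.py", "d.py"}, {"y.py"}, {"d.py", "e.py"}]
metrics = retrieval_metrics(retrieved, oracle)
print(metrics)
```

The "misses entirely" rate quoted above is simply one minus the "Any Oracle" column.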

Models Evaluated

| Model | Max Tokens | % Instances Coverable |
|---|---|---|
| ChatGPT-3.5 (16k) | 16,385 | 58.1% |
| GPT-4 (32k) | 32,768 | 84.1% |
| Claude 2 | 100,000 | 96.4% |
| SWE-Llama | ≥100,000 | ≥94.8% |
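A rough reading of the "% Instances Coverable" column: the share of task instances whose prompt fits inside a model's context window. The sketch below computes that share for a handful of made-up prompt lengths; the numbers are hypothetical and only the three window sizes come from the table.

```python
from typing import Sequence


def coverable_share(prompt_tokens: Sequence[int], max_tokens: int) -> float:
    """Fraction of prompts that fit within a context window of max_tokens."""
    return sum(n <= max_tokens for n in prompt_tokens) / len(prompt_tokens)


# Hypothetical prompt lengths for six task instances.
prompt_lengths = [4_200, 9_800, 15_000, 31_000, 64_000, 120_000]
shares = {
    limit: coverable_share(prompt_lengths, limit)
    for limit in (16_385, 32_768, 100_000)
}
for limit, share in shares.items():
    print(f"{limit:>7,} tokens: {share:.1%} coverable")
```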

Phase 4 — Experiments & Results

BM25 Results (Main Table)

| Model | SWE-bench % Resolved | Lite % Resolved |
|---|---|---|
| Claude 3 Opus | 3.79 | 4.33 |
| Claude 2 | 1.97 | 3.00 |
| GPT-4-turbo | 1.31 | 2.67 |
| SWE-Llama 13b | 0.70 | 1.00 |
| SWE-Llama 7b | 0.70 | 1.33 |
| ChatGPT-3.5 | 0.17 | 0.33 |

These numbers are brutal. The best model (Claude 3 Opus) solves fewer than 4% of real GitHub issues with a basic retriever. This is the point — the benchmark is deliberately hard.

Oracle Retrieval Results

| Model | % Resolved |
|---|---|
| Claude 2 | 4.80 |
| SWE-Llama 13b | 3.97 |
| SWE-Llama 7b | 3.01 |
| GPT-4 | 1.74 |
| ChatGPT-3.5 | 0.52 |

Even with perfect file selection, Claude 2 only hits 4.8%. GPT-4 trails both Claude 2 and the SWE-Llama models here, which may point to weaker use of long contexts, though its score comes from a 25% subset and is less reliable.

Oracle-Collapsed (Tightest Context)

Only show lines ±15 from the actual edit:

| Model | % Resolved |
|---|---|
| Claude 3 Opus | 9.39 |
| Claude 2 | 5.93 |
| GPT-4 | 3.40 |
| ChatGPT-3.5 | 1.09 |

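Every model improves once the surrounding code noise is removed, but unevenly: GPT-4 (1.74 → 3.40) and ChatGPT-3.5 (0.52 → 1.09) roughly double, while Claude 2 gains more modestly (4.80 → 5.93). Localization is a major bottleneck, alongside raw code editing ability.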
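The oracle-collapsed idea can be illustrated with a short helper that keeps only the lines within ±15 of each edited line. This is a sketch under assumptions: the `"..."` marker for elided regions and the 1-indexed line convention are choices made here, not the paper's exact prompt format.

```python
from typing import Iterable, List


def collapse_file(lines: List[str], edited_lines: Iterable[int], window: int = 15) -> List[str]:
    """Keep lines within `window` of any edited line (1-indexed); elide the rest."""
    keep = set()
    for ln in edited_lines:
        lo = max(1, ln - window)
        hi = min(len(lines), ln + window)
        keep.update(range(lo, hi + 1))

    out: List[str] = []
    prev = 0
    for ln in sorted(keep):
        if ln != prev + 1:
            out.append("...")  # hypothetical marker for skipped lines
        out.append(lines[ln - 1])
        prev = ln
    if keep and prev != len(lines):
        out.append("...")
    return out


source = [f"line {i}" for i in range(1, 101)]  # a made-up 100-line file
one_edit = collapse_file(source, edited_lines=[50])
two_edits = collapse_file(source, edited_lines=[50, 60])
near_top = collapse_file(source, edited_lines=[5])
print(len(one_edit), len(two_edits), len(near_top))
```

Overlapping windows merge into a single region, so two nearby edits cost far fewer tokens than two distant ones.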

Key Analysis Findings

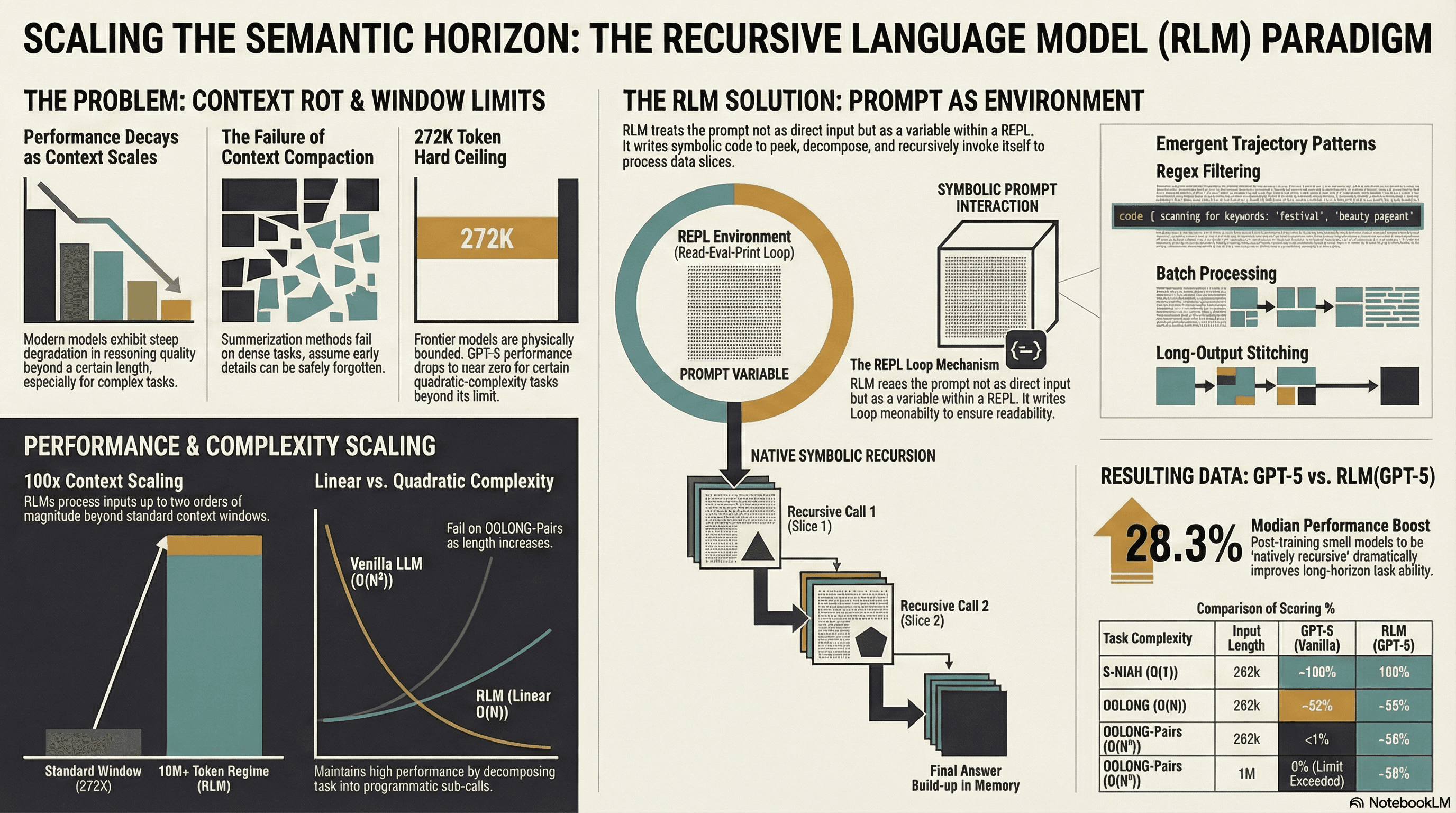

1. Context length kills performance. As total input tokens increase, resolution rate drops sharply. Models drown in irrelevant code.

2. Shorter edits are easier for models. Model patches average 17–30 total lines vs. gold patches at 39–74 lines. Models under-generate — they find the right location but don't make comprehensive enough changes.

3. Models generate "No-Op" patches most often. Breakdown of applied patches:

| Outcome | Claude 2 | ChatGPT-3.5 | SWE-Llama 13b |
|---|---|---|---|
| Resolved | 110 | 12 | 91 |
| Breaking Resolved | 26 | 2 | 10 |
| Partially Resolved | 15 | 4 | 10 |
| Work in Progress | 20 | 2 | 16 |
| No-Op | 471 | 174 | 672 |
| Regression | 436 | 90 | 397 |

Most patches either do nothing at all or actively break existing behavior. Genuinely partial solutions are rare.
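The six outcome labels can be read as a 3×2 grid: how many fail-to-pass tests now pass (all, some, none) crossed with whether every pass-to-pass test still passes. The sketch below encodes that reading. The category definitions are an interpretation of the table rather than something spelled out in this summary, so treat the mapping as an assumption.

```python
from typing import Dict, Sequence


def classify_patch(f2p_passed: Sequence[bool], p2p_passed: Sequence[bool]) -> str:
    """Label an applied patch from its per-test results (assumed definitions)."""
    fixed = sum(f2p_passed)
    nothing_broken = all(p2p_passed)
    if fixed == len(f2p_passed):
        return "Resolved" if nothing_broken else "Breaking Resolved"
    if fixed > 0:
        return "Partially Resolved" if nothing_broken else "Work in Progress"
    return "No-Op" if nothing_broken else "Regression"


def share_unhelpful(counts: Dict[str, int]) -> float:
    """Fraction of applied patches that are No-Op or Regression."""
    return (counts["No-Op"] + counts["Regression"]) / sum(counts.values())


# Claude 2 column from the table above.
claude2 = {
    "Resolved": 110, "Breaking Resolved": 26, "Partially Resolved": 15,
    "Work in Progress": 20, "No-Op": 471, "Regression": 436,
}
print(classify_patch([True, False], [True, True]))
print(f"{share_unhelpful(claude2):.1%} of Claude 2's applied patches fix nothing")
```

Plugging in the Claude 2 column, No-Op plus Regression account for 907 of 1,078 applied patches.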

4. No temporal leakage. Performance is roughly equal on pre-2023 vs. post-2023 issues, confirming models aren't "cheating" from memorized repo states.

5. SWE-Llama degrades under BM25. It was trained with oracle file context, so BM25's noisy context confuses it — it was trained to edit every file it sees.

6. Patch files > full file regeneration. Models asked to rewrite entire files performed worse than those generating diffs. Claude 2: 2.2% (full file) vs. 4.8% (patches).

Red Flags / Honest Assessment

❌ GPT-4 evaluated only on a 25% subset due to cost — results less reliable

❌ Only Python repos — limits generalizability

⚠️ BM25 is a weak retriever baseline; better agents (with search/grep tools) would change results substantially

✅ Execution-based eval is robust — no ambiguity about whether a fix works

✅ Temporal analysis is thorough and reassuring re: data contamination

✅ Multiple retrieval conditions tested

Phase 3 — Architecture / System Overview

ARCHITECTURE BREAKDOWN

──────────────────────────────────────────────────────

GitHub Issue

+

Codebase Snapshot (base commit)

│

▼

BM25 Retriever (or Oracle)

│ Selects top-k relevant files to fit context window

▼

Prompt Assembly

│ [Instructions] + [Issue Text] + [Retrieved Files]

│ + [Example .patch format]

▼

Language Model (Claude 2 / GPT-4 / SWE-Llama)

│

▼

Generated .patch file

│

▼

Evaluation Harness

├── git checkout base_commit

├── Install repo (conda env per version)

├── Apply test patch T

├── Apply model patch δ̂

├── Run test suite

└── Check: all FAIL_TO_PASS + PASS_TO_PASS pass?

│

▼

Resolved? Yes / No

Novel components vs. prior work:

Execution-based evaluation on real repos (vs. HumanEval's simple unit tests on self-contained functions)

Version-pinned conda environments per task instance (non-trivial engineering)

Fail-to-pass test tracking as the core success metric

Phase 5 — Practical Takeaways

1. Can I use this today? Yes. Dataset, evaluation harness, and leaderboard at swebench.com. There's also a pip-installable eval harness on GitHub.

2. What's worth stealing for your own work? The fail-to-pass test filtering approach. It's a clean way to construct task instances for any code-editing dataset: find changes where at least one test transitions from failing to passing. Applies to other languages/repos easily.

3. What does it replace? For evaluating coding agents or assistants on "real" tasks, SWE-bench should replace HumanEval or MBPP as your primary hard benchmark. Those are now too easy and don't reflect production-like scenarios.

4. Compute cost. Running the eval harness is expensive: it requires spinning up conda envs and running test suites per instance. For 2,294 instances, plan for significant infrastructure. That's why they offer SWE-bench Lite (300 instances) for iteration.

5. Limitations they underplayed

Only Python, only popular repos — skews toward well-maintained codebases. Real-world bugs often occur in messier code.

The retrieval baseline (BM25) is primitive. Modern agent systems with tool use, grep, AST search, etc. (like the subsequent SWE-agent paper) blow past these baselines.

Single-attempt evaluation (greedy decoding, Pass@1) — doesn't capture models that could solve problems with sampling.

No multimodal support: some issues embed images the model never sees, most often in visualization libraries (32% of matplotlib and 10% of seaborn instances).

Phase 6 — The "So What" Summary

🔑 Core innovation: A benchmark constructed from real merged GitHub PRs, requiring execution-verified codebase edits at repo scale — far harder and more realistic than prior coding benchmarks

⚡ Performance gain: Not a model paper. Key finding: the best model (Claude 3 Opus) solves only ~4% with BM25 retrieval and ~9% in the oracle-collapsed setting (gold files trimmed to ±15 lines around the edit). The gap between "knows code" and "fixes real bugs" is enormous.

🛠️ How to use it: Grab the harness from swebench.com; use SWE-bench Lite for fast evals; use full SWE-bench for serious capability measurement. Run your coding agent against it.

⚠️ Watch out for: Retrieval is a major bottleneck on top of model capability. Don't interpret low scores as pure "model intelligence" failures: better file localization would substantially change scores, though even with oracle files the best results stay in single digits. Also, SWE-Llama is brittle to context distribution shift from its training setup.

📚 What to read next:

SWE-agent (Yang et al., 2024): follows this work, achieves ~12% on the full benchmark by giving GPT-4 Turbo a proper shell/tool interface

Agentless (Xia et al., 2024): achieves competitive results with a surprisingly simple, non-agent approach

Large Language Models for Software Engineering: A Systematic Literature Review (Hou et al., 2023): broader context for where SWE-bench sits in the field