Positional Encoding in Transformers

1. Overview

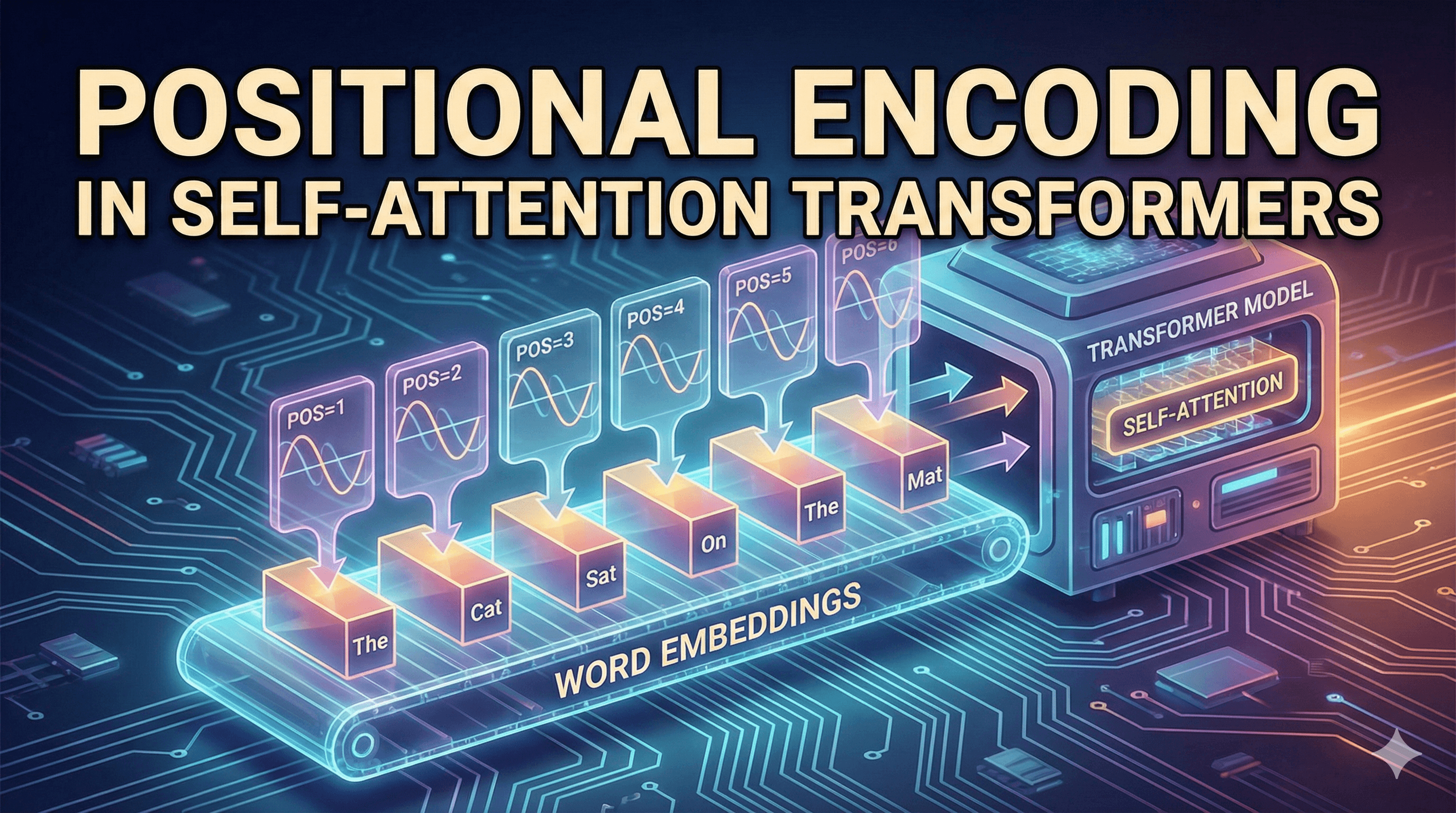

Positional Encoding is a technique used in Transformer architectures to encode the order of tokens in a sequence.

Transformers process tokens in parallel, unlike sequential models such as RNNs. Because of this, the model has no inherent understanding of token order. Positional encoding injects information about token positions into token embeddings before they enter the self-attention layers.

The final representation sent to the transformer is:

Input = TokenEmbedding + PositionalEncoding

This allows the model to learn both:

semantic meaning (from embeddings)

sequence order (from positional encoding)

2. Why This Exists

The Core Problem

Self-attention processes tokens simultaneously, not sequentially.

Example sentence:

"Nitish killed lion"

"Lion killed Nitish"

Both sentences contain the same tokens:

[Nitish, killed, lion]

If sent to self-attention simultaneously, the model cannot distinguish token order, so both sequences appear identical.

This is a fundamental limitation because word order determines meaning in natural language.

Why Previous Models Didn't Have This Problem

| Model | Order Awareness | Reason |

|---|---|---|

| RNN | Yes | Tokens processed sequentially |

| LSTM | Yes | Hidden state carries time information |

| Transformer | No | Tokens processed in parallel |

Transformers sacrifice sequential processing for parallel efficiency, so positional information must be explicitly added.

3. First Principles Explanation

To solve the ordering problem, we must encode position information alongside token embeddings.

Components

Token Embedding

Positional Encoding

Self-Attention Layer

Interaction

Token → Embedding Vector

Position → Positional Encoding Vector

Final Input = Embedding + Positional Encoding

Each token therefore carries:

semantic information + positional information

Design Requirements

A good positional encoding must satisfy:

- Bounded values

Neural networks train best with values in small ranges (e.g. -1 to 1).

- Continuous values

Neural networks prefer smooth functions, not discrete jumps.

- Ability to capture relative positions

The model should infer relationships like:

distance(token_i, token_j)

- Unique representation

Each position must have a distinct encoding.

4. How It Works

Step 1 — Tokenize Sentence

Example:

Sentence: "River Bank"

Tokens: [River, Bank]

Step 2 — Convert Tokens to Embeddings

Example:

River → embedding vector (d_model)

Bank → embedding vector (d_model)

Example dimension:

d_model = 512

Step 3 — Generate Positional Encoding

For position pos and dimension i:

PE(pos,2i) = sin(pos / 10000^(2i/d_model))

PE(pos,2i+1) = cos(pos / 10000^(2i/d_model))

Key ideas:

even dimensions → sine

odd dimensions → cosine

Step 4 — Add Positional Encoding to Embedding

InputVector = Embedding + PositionalEncoding

Step 5 — Send to Self-Attention

The resulting vector contains both:

semantic meaning + position

This vector becomes the input to the transformer encoder.

5. Example

Situation

Sentence:

"The lion runs"

Token positions:

The → position 0

lion → position 1

runs → position 2

Implementation Idea

Compute positional encodings:

PE(0)

PE(1)

PE(2)

Then combine:

Embedding(The) + PE(0)

Embedding(lion) + PE(1)

Embedding(runs) + PE(2)

Expected Outcome

The transformer can now learn relationships such as:

which word comes first

relative distances between words

syntactic dependencies

Summary

Transformers process tokens in parallel, losing order information.

Positional encoding injects token position information.

Encodings are generated using sine and cosine functions at multiple frequencies.

Positional vectors have the same dimension as embeddings.

Final input to transformers is:

embedding + positional_encoding